Aperio

One brain. Every AI agent. Nothing forgotten. — Self-hosted memory layer via MCP + Postgres + pgvector


✨ Aperio

One brain. Every agent. Nothing forgotten.

A self-hosted personal memory layer for AI agents. Docker + Postgres + pgvector + MCP + Ollama.

Your context, always available.

• Getting Started • Architecture • Philosophy • AI Providers • How To Use? • Privacy • Security • Design Decisions •

• 🌐 Site: https://baiganio.github.io/aperio •

💡 Pro Tip: Visit the Aperio Wiki or Discussions for extensive documentation on advanced topics.

🔍 Explore more: Early Testing Contributors • FAQ • Troubleshooting

🏗️ (Quick) Project Structure

📂 aperio/ <---= You are here if You are Developer. He-he ;/

├── 📂 db/

│ ├── index.js # Store factory — auto-selects Postgres or LanceDB

│ ├── lancedb.js # LanceDB adapter (no Docker needed)

│ ├── postgres.js # Postgres + pgvector adapter

│ ├── types.js # Shared DB types

│ └── 📂 migrations/ # 001_init · 002_pgvector

├── 📂 docker/

│ └── docker-compose.yml # pgvector/pgvector:pg16

├── 📂 docs/

│ └── index.html # Landing page for GitHub Pages

├── 📂 id/

│ └── whoami.md # Instructions for AI agent identity (edit this!)

├── 📂 lib/

│ ├── agent.js # Agent core — Anthropic / DeepSeek / Ollama loops

│ ├── terminal.js # Terminal chat client

│ ├── 📂 emitters/ # CLI and WebSocket stream emitters

│ ├── 📂 handlers/ # Attachment and memory handlers

│ ├── 📂 helpers/ # Embeddings, logger, port, shutdown, Ollama health

│ ├── 📂 routes/ # Express API routes + path safety guards

│ ├── 📂 utils/ # Chat utilities

│ └── 📂 workers/ # Deduplication, reasoning adapters, skill loader

├── 📂 mcp/

│ ├── index.js # MCP server entry point

│ └── 📂 tools/

│     ├── memory.js # remember · recall · update_memory · forget · backfill_embeddings · deduplicate_memories

│     ├── files.js # read_file · write_file · append_file · scan_project

│     ├── web.js # fetch_url

│     └── image.js # read_image · preprocess_image

├── 📂 public/

│ └── index.html # Web UI — themes, streaming, sidebar

├── 📂 skills/ # Memory, reasoning, tools, coding standards, etc.

├── 📂 tests/

├── .env.example # Pre-set quick configuration

├── package.json # Dependencies

└── server.js # Express + WebSocket + agent loop

💡 Tip: `id/whoami.md` controls the identity of the AI agent. It is the most impactful file to customize.

Getting Started

Prerequisites

- Node.js 18+ — download from https://nodejs.org/en/download

- Docker Desktop — (optional, for Postgres mode)

- Ollama — download from https://ollama.com/download (optional, for local AI)

- Anthropic API key — (optional, for cloud AI)

- DeepSeek API key — (optional, for cloud AI)

- Voyage AI API key — (optional, for cloud embeddings)

Step 1. Clone & Configure Environment Variables

The dedicated dev branch is stripped of extra files and folders and contains only what is needed to run Aperio.

# dedicated developer branch - no extra files

git clone --depth 1 -b dev https://github.com/BaiGanio/aperio.git

cd aperio

# restore dependencies

npm install

A ready-to-use `.env.example` for a fully local setup:

# cp .env.example .env

DATABASE_URL=postgresql://aperio:aperio_secret@localhost:5432/aperio

AI_PROVIDER=ollama

OLLAMA_MODEL=qwen2.5:3b

EMBEDDING_PROVIDER=transformers # fully local, no API key required

Step 2. Databases & Migrations

Aperio supports two vector store backends — pick the one that fits your setup:

| Backend | When to use | Requires |
|---|---|---|
| LanceDB (default) | No Docker, quick start, single user | Nothing extra |
| Postgres + pgvector | Multi-agent, persistent, production-like | Docker |

# LanceDB is the default — no extra steps needed.

# Skip the Docker commands below and go directly to Step 3.

💡 Tip: Set `DB_BACKEND=lancedb` in `.env` to force LanceDB, or `DB_BACKEND=postgres` for Postgres. If not set, Aperio auto-detects: it uses Postgres when Docker is running and LanceDB otherwise.
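The selection rule in the tip above can be sketched as follows. This is a minimal illustration, not the code in `db/index.js`: the function name `pickBackend` and the `dockerRunning` flag are hypothetical, and how Aperio actually detects a running Docker daemon is not covered here.

```javascript
// Hypothetical sketch of the backend selection rule described above.
// `pickBackend` and `dockerRunning` are illustrative names, not Aperio's API.
function pickBackend(env, dockerRunning) {
  const forced = (env.DB_BACKEND || "").trim().toLowerCase();
  // An explicit DB_BACKEND always wins.
  if (forced === "lancedb" || forced === "postgres") return forced;
  // No explicit setting: Postgres when Docker is running, LanceDB otherwise.
  return dockerRunning ? "postgres" : "lancedb";
}

console.log(pickBackend({ DB_BACKEND: "lancedb" }, true)); // lancedb
console.log(pickBackend({}, false)); // lancedb
console.log(pickBackend({}, true)); // postgres
```

Setting `DB_BACKEND` explicitly avoids surprises when Docker happens to be running for an unrelated project.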

# POSTGRES MODE — start the database and run migrations

cd docker && docker compose up -d && cd ..

- macOS/Linux

docker exec -i aperio_db psql -U aperio -d aperio < db/migrations/001_init.sql

docker exec -i aperio_db psql -U aperio -d aperio < db/migrations/002_pgvector.sql

- Windows

cmd /c "docker exec -i aperio_db psql -U aperio -d aperio < db/migrations/001_init.sql"

cmd /c "docker exec -i aperio_db psql -U aperio -d aperio < db/migrations/002_pgvector.sql"

Step 3. Install Ollama & Pull Models

💡 Tip: Skip this step entirely if you are using Anthropic or DeepSeek as your `AI_PROVIDER`.

ollama serve # use separate terminal

ollama pull qwen2.5:3b # LLM — lightweight, fast, good tool-calling

# ollama pull llama3.1 # LLM — solid tool-calling, no reasoning

# ollama pull qwen3:4b # LLM — strong reasoning, thinking mode support

Step 4. Start Aperio Web UI

npm run start:local # localhost:31337 → browser opens automatically

Step 5. Start Aperio terminal chat

npm run chat:local # runs as proxy or standalone

That's it. No API keys. No cloud. Full semantic memory on your machine.

Q: Now what?

💡 Stuck on the installation steps? — check Troubleshooting wiki.

💡 Check Aperio MCP Tools Guide wiki for extended examples.

💡 Check Commands wiki for the available options to run the app.

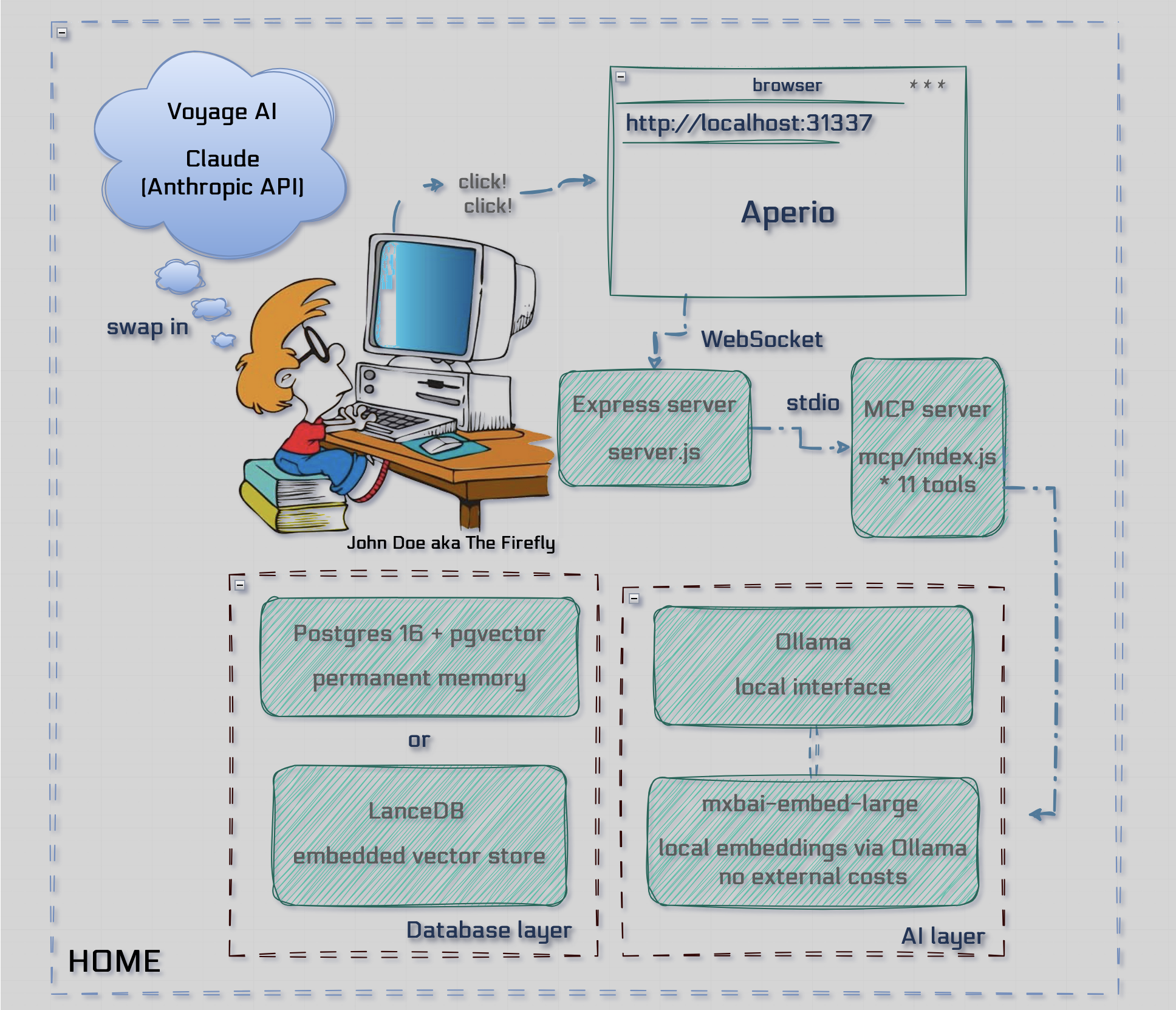

Architecture

Q: Feel a need to read?

💡 Tip: Visit Architecture & Design for in-depth explanations.

MCP Tools

Aperio exposes 13 tools over MCP. Any MCP-compatible agent (Cursor, Windsurf, Claude, etc.) can call them.

| Category | Tool | What it does |
|---|---|---|
| Memory | remember | Save a memory with type, title, tags, importance, and optional expiry |
| Memory | recall | Semantic or full-text search across all memories |
| Memory | update_memory | Update an existing memory by ID; re-generates its embedding |
| Memory | forget | Delete a memory by ID |
| Memory | backfill_embeddings | Generate embeddings for memories that are missing one |
| Memory | deduplicate_memories | Find and merge near-duplicate memories by cosine similarity |
| Files | read_file | Read a code or text file (max 500 lines per call, paginated via offset) |
| Files | write_file | Create or overwrite a file (subject to write-path guard) |
| Files | append_file | Append content to an existing file without touching the rest |
| Files | scan_project | Traverse a project folder; returns a file tree and reads key files |
| Web | fetch_url | Fetch a URL, strip HTML, truncate at 15 000 characters |
| Image | read_image | Load an image (file path or base64) for the agent to analyse |
| Image | preprocess_image | Normalise an image to RGB PNG before sending to a local VLM (strips alpha, letterboxes to 896×896) |

💡 Tip: Check Aperio MCP Tools Guide for call examples.
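To make "near-duplicate by cosine similarity" concrete, here is a minimal sketch of the idea. It is not the code behind `deduplicate_memories`: the function names, the `{ id, embedding }` record shape, and the `0.95` threshold are all assumptions chosen for illustration.

```javascript
// Cosine similarity between two equal-length numeric vectors.
function cosineSimilarity(a, b) {
  if (a.length !== b.length) throw new Error("vectors must have the same length");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // a zero vector has no direction
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return [idA, idB] pairs whose embeddings are at least `threshold` similar.
// The 0.95 default is a hypothetical value, not Aperio's setting.
function findNearDuplicates(memories, threshold = 0.95) {
  const pairs = [];
  for (let i = 0; i < memories.length; i++) {
    for (let j = i + 1; j < memories.length; j++) {
      const score = cosineSimilarity(memories[i].embedding, memories[j].embedding);
      if (score >= threshold) pairs.push([memories[i].id, memories[j].id]);
    }
  }
  return pairs;
}

const sample = [
  { id: "a", embedding: [1, 0, 0] },
  { id: "b", embedding: [0.99, 0.05, 0] },
  { id: "c", embedding: [0, 1, 0] },
];
console.log(findNearDuplicates(sample)); // [ [ 'a', 'b' ] ]
```

The pairwise loop is quadratic in the number of memories, which is acceptable for a sketch; a vector store would normally answer the same question with an indexed nearest-neighbour query.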

Philosophy

Aperio is open source and self-hosted because your memories are yours.

- It runs entirely on your machine: no API keys, no data leaving your network, no cloud dependency.

- Local and private by default, self-hosted by design, free forever.

- Cloud AI is available as a power upgrade, but you will never be forced to use it.

- 🔒 Local by default: Ollama + local embeddings, zero external calls

- ☁️ Cloud as upgrade: Claude / DeepSeek for deep research & heavy tasks

- 🗄️ Your brain, your data: Postgres or LanceDB lives on your machine. You own it.

- 🖥️ MCP-native: Any MCP agent plugs in (Cursor, Windsurf, etc.)

- ✅ Free to run: No subscription. No per-message cost. Just your hardware.

‼️ What Aperio Is Not!

- 🚫 Not a cloud service: No hosted version, no SaaS, no managed infra

- 🚫 Not a managed product: No support contracts, SLAs, or guaranteed uptime

- 🚫 Not a plugin or extension: It's a self-hosted server you run yourself

- 🚫 Not a replacement for your AI: A memory layer alongside Claude, Cursor, etc.

- 🚫 Not plug-and-play: Needs Node.js, Docker, and basic terminal comfort

- 🚫 Not production-hardened: Early software, built in the open, improving fast

AI Providers

Switch with a single line in .env. Everything else — memories, tools, UI — stays identical.

AI_PROVIDER=ollama # "ollama" | "anthropic" | "deepseek"

⬡ Ollama (Default — Local, Free, Private)

No API keys, no data leaving your machine.

AI_PROVIDER=ollama

OLLAMA_MODEL=qwen2.5:3b

OLLAMA_BASE_URL=http://localhost:11434

Recommended models (pull with ollama pull <model>):

| Model | Best for |
|---|---|
| qwen2.5:3b | Default: lightweight, fast, good tool-calling |
| llama3.1 | Solid tool-calling, no thinking/reasoning overhead |
| qwen3:4b | Strong reasoning, thinking mode |
| deepseek-r1:32b | Heavy reasoning, requires ≥ 60 GB RAM |

💡 Tip: Set `CHECK_RAM=true` in `.env` to let Aperio auto-select a model based on available RAM.

✦ Anthropic Claude (Optional — Cloud Upgrade)

For heavy research, complex multi-step reasoning, or the strongest tool-calling available.

AI_PROVIDER=anthropic

ANTHROPIC_API_KEY=sk-ant-...

ANTHROPIC_MODEL=claude-haiku-4-5-20251001

Available models (set via ANTHROPIC_MODEL):

| Model | Notes |
|---|---|
| claude-haiku-4-5-20251001 | Fast and cost-efficient, a good default |
| claude-sonnet-4-6 | Balanced performance and cost |
| claude-opus-4-7 | Most capable, highest cost |

◈ DeepSeek (Optional — Cloud Upgrade)

Cost-effective cloud alternative with strong reasoning capabilities.

AI_PROVIDER=deepseek

DEEPSEEK_API_KEY=sk-...

DEEPSEEK_MODEL=deepseek-chat

Sign up at platform.deepseek.com. No vision support — image tools are disabled in DeepSeek mode.

Embeddings

Embeddings power semantic search across your memories. Aperio supports two providers:

EMBEDDING_PROVIDER=transformers # "transformers" | "voyage"

HuggingFace Transformers (Default — Fully Local)

Downloads mixedbread-ai/mxbai-embed-large-v1 (ONNX, quantized) on first run. No daemon, no API key, no network calls after the initial download.

EMBEDDING_PROVIDER=transformers

Voyage AI (Optional — Cloud)

Higher-quality embeddings, free tier: 50M tokens/month.

EMBEDDING_PROVIDER=voyage

VOYAGE_API_KEY=pa-...

Sign up at dash.voyageai.com.

Q: Is that all?

💡 Tip: Check out our wiki pages AI Agents Comparison & Embeddings for more details.

Privacy

Reading Files with Local AI

Ollama itself has no file system access — it's purely an inference engine. Aperio's MCP layer bridges the gap.

When you ask the AI to read a file, here's what actually happens:

You → "read /path/to/server.js and explain the WebSocket handler"

MCP Server → calls read_file tool, loads the file from disk

Ollama → receives the file contents as context, reasons over it

You ← answer based on your actual code

The model never touches your file system directly. Aperio reads the file and injects the content into the conversation.
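The flow above can be sketched in a few lines. This is an illustration of the idea only: `buildContext` is a hypothetical helper, and the `user` / `tool` message shape is an assumption rather than the format Aperio or Ollama actually exchange.

```javascript
const fs = require("node:fs");
const os = require("node:os");
const path = require("node:path");

// Hypothetical helper: the server process reads the file from disk and hands
// the text to the model as conversation context. The model only sees text.
function buildContext(question, filePath) {
  const contents = fs.readFileSync(filePath, "utf8");
  return [
    { role: "user", content: question },
    { role: "tool", name: "read_file", content: contents },
  ];
}

// Usage: write a small sample file, then build the context the model would receive.
const sample = path.join(os.tmpdir(), "aperio-sketch.js");
fs.writeFileSync(sample, "console.log('hi');\n");
console.log(buildContext("Explain this file", sample));
```

Because the read happens in the server process, every file access can be checked and logged before any content reaches the model.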

Q: You call this privacy?

💡 Check out our wiki page MCP Tools for more details.

Security

Aperio runs on your machine and has access to your file system through the scan_project, write_file, append_file, and read_file tools. File operations are gated by a path safety system — read and write access are controlled independently.

File System Access

All file operations go through lib/routes/paths.js, which resolves and validates every path before it reaches the disk.

Two environment variables control what is accessible:

# Allow read operations only inside these directories (comma-separated absolute paths)

APERIO_ALLOWED_PATHS_TO_READ=/Users/yourname/projects,/Users/yourname/documents

# Allow write operations only inside these directories (comma-separated absolute paths)

APERIO_ALLOWED_PATHS_TO_WRITE=/Users/yourname/projects

How path resolution works:

- Both values default to the current working directory (`process.cwd()`) when not set, which is the Aperio project root when you run `npm run start:local`.

- Paths are resolved to absolute form at startup. `~` is expanded to the working directory.

- A request to read or write `/some/path/file.txt` is allowed only if its resolved absolute path starts with one of the permitted directories. Paths outside the allow-list are rejected with a clear error message before any I/O occurs.

- Read and write guards are separate. You can grant broad read access while keeping write access narrow. For example, read your entire `~/projects` tree but only write inside the Aperio project root.
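The resolution rules above can be sketched as a small allow-list check. This is a hedged illustration, not the contents of `lib/routes/paths.js`: `parseAllowedDirs` and `isPathAllowed` are hypothetical names, `~` expansion is left out, and the sketch compares whole path segments with `path.relative` instead of a raw string prefix so that a sibling such as `/Users/yourname/projects-old` does not match `/Users/yourname/projects`.

```javascript
const path = require("node:path");

// Parse a comma-separated env value into absolute directories.
// Falls back to the working directory when the value is unset or empty.
function parseAllowedDirs(value, cwd = process.cwd()) {
  if (!value || !value.trim()) return [path.resolve(cwd)];
  return value
    .split(",")
    .map((entry) => entry.trim())
    .filter(Boolean)
    .map((entry) => path.resolve(cwd, entry));
}

// True when `target` resolves to an allowed directory or to a path inside one.
function isPathAllowed(target, allowedDirs, cwd = process.cwd()) {
  const resolved = path.resolve(cwd, target); // also collapses "../" segments
  return allowedDirs.some((dir) => {
    const rel = path.relative(dir, resolved);
    const escapes =
      rel === ".." || rel.startsWith(".." + path.sep) || path.isAbsolute(rel);
    return !escapes;
  });
}

const readDirs = parseAllowedDirs("/Users/yourname/projects,/Users/yourname/documents");
console.log(isPathAllowed("/Users/yourname/projects/app/server.js", readDirs)); // true
console.log(isPathAllowed("/Users/yourname/projects-old/notes.md", readDirs)); // false
```

Resolving the target before comparing it is what defeats `../` traversal: the check runs on the final absolute path, never on the string the model supplied.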

What the model can and cannot do:

| Operation | Guard | Default scope |
|---|---|---|
| read_file | APERIO_ALLOWED_PATHS_TO_READ | Project root |
| write_file | APERIO_ALLOWED_PATHS_TO_WRITE | Project root |
| append_file | APERIO_ALLOWED_PATHS_TO_WRITE | Project root |
| scan_project | APERIO_ALLOWED_PATHS_TO_READ | Project root |

Additionally, read_file enforces:

- Extension allow-list: only code and text files (`.js`, `.ts`, `.py`, `.md`, `.json`, `.sql`, `.sh`, etc.)

- Size cap: files larger than 500 KB are rejected

- Pagination: reads at most 500 lines per call; use the `offset` parameter to page through larger files
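Paging with an offset can be sketched like this. It is a minimal illustration under stated assumptions: `readPage` is a hypothetical function, and it assumes `offset` counts lines from zero, which the text above does not spell out.

```javascript
// Return at most `limit` lines starting at line `offset` (zero-based),
// plus the offset to request next, or null when the file is exhausted.
function readPage(text, offset = 0, limit = 500) {
  const lines = text.split("\n");
  const page = lines.slice(offset, offset + limit);
  const next = offset + page.length;
  return {
    lines: page,
    nextOffset: next < lines.length ? next : null,
    totalLines: lines.length,
  };
}

// Usage: walk a 1200-line text in pages of 500 lines.
const text = Array.from({ length: 1200 }, (_, i) => `line ${i + 1}`).join("\n");
let offset = 0;
while (offset !== null) {
  const page = readPage(text, offset);
  console.log(`offset ${offset}: ${page.lines.length} lines`);
  offset = page.nextOffset;
}
```

Returning the next offset alongside the lines lets the caller keep requesting pages until it receives `null`, without having to know the file length in advance.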

📄 Take note:

- Only run Aperio on a machine you trust

- Do not expose the MCP server or web UI to the public internet without authentication

- Review any file write operations before confirming them: `write_file` overwrites completely with no undo

- The AI model can be prompted (or hallucinate) to write to sensitive paths, so always review before confirming

- Never commit your `.env` file; it contains your database URL and API keys

- Write paths should be equal to or a strict subset of read paths

Q: And this is it?

💡 Check out our wiki page Path safety for more details.

One brain. Every agent. Nothing forgotten.

From Latin aperire — to open, to reveal, to bring into the light.