EdgeKnowledge Skill

AI-powered edge knowledge mining from underground forums. Deep crawls Reddit, BlackHatWorld, GreyHatMafia with browser automation, visual analysis, and structured reporting.


Edge Knowledge

Chinese Documentation | English Documentation

AI-powered edge knowledge mining system. It recommends high-value forums (Reddit, BlackHatWorld, GreyHatMafia, etc.) based on user needs, crawls them in depth with browser-use and visual recognition, analyzes edge tactics, power users, and resources, and outputs a single structured Markdown report.

🎯 Core Philosophy

💡 Knowledge Equity Initiative: This project encourages community contributions! You can create Git branches to build domain-specific search expert skills (e.g., SEO Expert, Affiliate Hunter, Tool Digger), making edge knowledge accessible to more people.

🤝 How to Contribute via PRs:

- 🌿 Build Domain-Specific Branches - Create specialized versions for specific domains (e.g., seo-expert, affiliate)

- 🔧 Optimize Existing Skill - Improve tool versatility and robustness (anti-scraping strategies, error handling, performance optimization)

- 📚 Share Quality Forums - Add deep-dive forums you use to forum_database.json with search strategies

- 🤖 Add Automation Capabilities - Integrate scheduled tasks, push to Notion/Feishu/Slack and other collaboration tools

Let's break information barriers and promote knowledge equity together! 🚀

What is Edge Knowledge?

In the AI era, information that LLMs can easily understand or collect from the internet counts as common knowledge. Edge knowledge is the opposite: scarce industry know-how and gray/black hat techniques that push against the boundaries of platform rules.

Edge Knowledge Includes:

- ✅ Black/White/Gray Hat SEO: PBN, Parasite SEO, Cloaking, Link Farms

- ✅ Tactical Tricks: Banned, risky, but effective methods

- ✅ Underground Tools: GSA SER, SEnuke, Xrumer, Scrapebox

- ✅ Real Data: Success rates, prices, risks, case numbers

- ✅ Controversial Tactics: Highly upvoted replies, contested discussions, and hands-on experience shared by practitioners

Does NOT Include:

- ❌ White Hat Platitudes ("write good content and traffic will come")

- ❌ Official Documentation (Google SEO Guidelines)

- ❌ Mainstream Advice ("improve user experience")

Three Characteristics of Edge Knowledge

- Freshness - Recent information (post-2025)

- Scarcity - Exclusive information (known in small circles)

- Credibility - Trustworthy information (with data and cases)

✨ Core Capabilities

V1: Foundation

- 🎯 Intent Extraction & Query Generalization - Broadens your query into related search directions and recommends high-quality forums

- 🧠 User Preference Memory - Remembers favorite forums and search habits

- 🔐 Credential Management - Automatically manages forum credentials, supports session reuse

- 🛡️ Anti-Detection System - Fixed fingerprints, random delays, session management to reduce account risk

- 🌐 Smart Browser Crawling - Uses browser-use skill, supports screenshots + visual recognition

- 🔍 Deep Content Analysis - Edge knowledge identification, power user identification, resource extraction

- 📊 Single Report Output - Produces one structured Markdown report, named by date and topic

🆕 V2: Hunter Mode (NEW!)

V2 adds aggressive resource acquisition capabilities:

| Feature | Description |
|---|---|
| 🎯 Value Signal Detection | 6 pattern types: Reply-unlock, Hidden content, Download links, Extract codes, Attachments, Task thresholds |
| 🤖 Auto-Reply System | 25 random templates (15 EN + 10 CN), auto language detection |
| 📦 Resource Downloader | Downloads ALL file types (.exe/.bat/.torrent), maintains completeness |
| 🔗 Deep Dive Tracking | External link follow, Author tracking, Comment section mining |
| 🧵 Tool Integration | Agent-Reach, gallery-dl, yt-dlp, Crawl4AI ready |

🚀 Quick Install

git clone https://github.com/1596941391qq/EdgeKnowledge_Skill.git

cd EdgeKnowledge_Skill

chmod +x install.sh

./install.sh

Or copy this skill to Claude Code's skills directory:

cp -r edge-knowledge ~/.claude/skills/

🔄 MCP Tool Routing (V2)

V2 includes an intelligent three-tier routing engine that automatically selects a tool for each task: it tries the cheapest option first and falls back to the next tier when that fails.

Tool Tiers

| Tier | Tool | Cost | Best For |
|---|---|---|---|
| Tier 1 | browser-use | Free (local Playwright) | Screenshot + visual recognition, clicks/scrolls/forms, JS lazy-load, post-login access |
| Tier 2 | agent-browser | Free (Vercel CLI) | Repetitive structured extraction, @e1/@e2 element selection, scripted multi-step operations |
| Tier 3 | google-gemini-mcp | API key (per-token) | Bypassing anti-bot blocks, batch URL analysis (>10 pages), complex multimodal understanding |

Routing Rules

IF captcha_detected AND captcha_type == "recaptcha_v2":
    → ai-captcha-bypass (GPT-4o or Gemini 2.5) → retry
IF cloudflare_blocked AND browser_use_failed:
    → google-gemini-mcp (Tier 3)
IF batch_analysis AND urls > 10:
    → google-gemini-mcp (concurrent)
IF visual_heavy AND needs_screenshot:
    → browser-use (Tier 1)
IF download_only:
    → gallery-dl / yt-dlp (bypasses browser)

MCP Server Config

All MCP server configurations are in mcp_config.json:

- google-gemini-mcp: Gemini 2.5 deep search, URL fetching, and multimodal analysis

- ai-captcha-bypass: GPT-4o/Gemini-driven CAPTCHA solving (Selenium + Firefox)

⚙️ Configuration Files

1. forum_database.json

The forum knowledge base containing forum information and search strategies.

Structure:

{
  "categories": {
    "Q&A_Search": {
      "description": "Suitable for mining deep discussions and real user feedback in comment sections",
      "forums": [...]
    },
    "Edge_Knowledge_Search": {
      "description": "Suitable for mining gray/black hat techniques not found in mainstream channels",
      "forums": [...]
    },
    "Deep_Dive_Forums": {
      "description": "Deep content that others don't know about",
      "forums": [...]
    }
  },
  "search_strategies": {
    "Instagram_Growth": {
      "keywords": [...],
      "recommended_forums": [...],
      "focus": "Real feedback in comment sections and gray techniques"
    }
  }
}

Usage:

- The system automatically reads this file to recommend forums

- You can add new forums or search strategies

- Each forum includes: name, URL, rating, cost, target audience, tags
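The recommendation logic itself is not spelled out here. A minimal Python sketch, assuming forum entries carry the `name` and `rating` fields shown in the FAQ, that `recommended_forums` lists forum names, and that a strategy matches when any of its `keywords` appears in the request. The function names and sample keywords are hypothetical, not the skill's actual code.

```python
from __future__ import annotations

from typing import Any


def index_forums(db: dict[str, Any]) -> dict[str, dict[str, Any]]:
    """Map forum name -> forum entry across every category in forum_database.json."""
    index: dict[str, dict[str, Any]] = {}
    for category in db.get("categories", {}).values():
        for forum in category.get("forums", []):
            index[forum["name"]] = forum
    return index


def recommend_forums(db: dict[str, Any], request: str, limit: int = 5) -> list[dict[str, Any]]:
    """Collect forums from strategies whose keywords occur in the request, best rating first."""
    text = request.lower()
    forums = index_forums(db)
    picked: dict[str, dict[str, Any]] = {}
    for strategy in db.get("search_strategies", {}).values():
        if any(kw.lower() in text for kw in strategy.get("keywords", [])):
            for name in strategy.get("recommended_forums", []):
                if name in forums:  # skip names with no matching forum entry
                    picked[name] = forums[name]
    ranked = sorted(picked.values(), key=lambda f: f.get("rating", 0), reverse=True)
    return ranked[:limit]


# Hypothetical sample data; ratings taken from the Supported Forums table.
db = {
    "categories": {
        "Edge_Knowledge_Search": {
            "description": "Gray/black hat techniques",
            "forums": [
                {"name": "BlackHatWorld", "rating": 8.5},
                {"name": "GreyHatMafia", "rating": 9.5},
            ],
        }
    },
    "search_strategies": {
        "Instagram_Growth": {
            "keywords": ["instagram", "followers"],
            "recommended_forums": ["BlackHatWorld", "GreyHatMafia"],
            "focus": "Real feedback in comment sections",
        }
    },
}

print([f["name"] for f in recommend_forums(db, "cost-effective Instagram growth services")])
# ['GreyHatMafia', 'BlackHatWorld']
```

Names in `recommended_forums` that have no matching entry are skipped silently; a stricter version might report them so typos in the database surface early.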

2. memory.json.template

Template for user preferences and crawling history. Copy to memory.json for first use:

cp memory.json.template memory.json

Structure:

{
  "userPreferences": {
    "favoriteForums": ["BestBlackHatForum"],
    "domains": ["SEO", "Black Hat Techniques", "Traffic Arbitrage"],
    "lastUsedDomain": "Black Hat SEO"
  },
  "forumCredentials": {
    "bestblackhatforum.com": {
      "username": "",
      "password": "",
      "lastLogin": "",
      "loginCount": 1,
      "cookies": null,
      "localStorage": null,
      "sessionValid": true
    }
  },
  "crawledResources": [],
  "antiDetection": {
    "viewport": {"width": 1920, "height": 1080},
    "userAgent": "",
    "timezone": "",
    "locale": "",
    "randomDelayRange": [5000, 30000],
    "maxLoginPerDay": 3,
    "reuseSession": true,
    "sessionExpiryHours": 24
  }
}

Fields Explanation:

- userPreferences: Your favorite forums and domains

- forumCredentials: Forum login credentials (auto-saved when you provide them)

- crawledResources: History of crawled URLs (prevents duplicate crawling)

- antiDetection: Anti-detection configuration (viewport, delays, login limits)

Privacy Note: memory.json is listed in .gitignore, so it won't be committed to Git and your credentials stay on your local machine. They are stored as plain JSON, so protect the file accordingly.
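The template shows crawledResources only as an empty list, so its entry format is an assumption here: this sketch accepts either plain URL strings or objects with a `url` field. It illustrates the "prevents duplicate crawling" behavior by normalizing URLs (lower-case scheme and host, no fragment, no trailing slash) before comparing. All function names are hypothetical.

```python
from __future__ import annotations

from typing import Any, Iterable
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Lower-case scheme and host, drop the #fragment and any trailing slash on the path."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/")
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))


def crawled_set(memory: dict[str, Any]) -> set[str]:
    """Collect normalized URLs from crawledResources (strings or {"url": ...} objects)."""
    seen: set[str] = set()
    for entry in memory.get("crawledResources", []):
        url = entry if isinstance(entry, str) else entry.get("url", "")
        if url:
            seen.add(normalize_url(url))
    return seen


def filter_new(memory: dict[str, Any], candidates: Iterable[str]) -> list[str]:
    """Keep only URLs not crawled before, preserving order and dropping in-batch repeats."""
    seen = crawled_set(memory)
    fresh: list[str] = []
    for url in candidates:
        key = normalize_url(url)
        if key not in seen:
            seen.add(key)
            fresh.append(url)
    return fresh


def record_crawled(memory: dict[str, Any], urls: Iterable[str]) -> None:
    """Append newly crawled URLs so later runs skip them."""
    memory.setdefault("crawledResources", []).extend({"url": u} for u in urls)


memory = {"crawledResources": ["https://example.com/threads/123/"]}
batch = [
    "https://example.com/threads/123#post-9",
    "https://example.com/threads/456",
    "https://EXAMPLE.com/threads/456/",
]
new_urls = filter_new(memory, batch)
print(new_urls)  # ['https://example.com/threads/456']
record_crawled(memory, new_urls)
```

Normalizing before comparison means links to a specific post (`#post-9`) or with a trailing slash are treated as the thread already crawled.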

3. value_patterns.json (V2)

Value signal detection patterns for Hunter Mode.

Patterns Include:

- reply_unlock: "Reply to unlock hidden content"

- hidden_content: "Hidden content / Spoiler blocks"

- download_link: Mega/Mediafire/Baidu pan links

- extract_code: "Password: xxx" / "Code: xxx"

- attachment: "Download attachment"

- task_threshold: "Need X posts / X likes to view"

4. platforms.json (V2)

Platform-specific download configurations.

Structure:

{
  "mega.nz": {
    "tool": "gallery-dl",
    "args": ["--no-mtime"],
    "maxConcurrent": 2
  },
  "youtube.com": {
    "tool": "yt-dlp",
    "args": ["-f", "best"]
  }
}
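How a URL is matched to a platforms.json key is not documented. A minimal sketch, assuming a link matches its exact host or any parent domain (so www.youtube.com uses the youtube.com entry) and that a missing maxConcurrent means one download at a time. It only builds the command line; launching the tool is left out. Function names are hypothetical.

```python
from __future__ import annotations

from typing import Any
from urllib.parse import urlsplit


def lookup_platform(platforms: dict[str, Any], url: str) -> dict[str, Any] | None:
    """Find the config for the URL's host, trying parent domains (www.youtube.com -> youtube.com)."""
    labels = (urlsplit(url).hostname or "").lower().split(".")
    for i in range(len(labels) - 1):  # stop before the bare top-level domain
        config = platforms.get(".".join(labels[i:]))
        if config is not None:
            return config
    return None


def build_download_command(platforms: dict[str, Any], url: str) -> tuple[list[str], int] | None:
    """Return (argv, max_concurrent) for the matching platform, or None if unsupported."""
    config = lookup_platform(platforms, url)
    if config is None:
        return None
    argv = [config["tool"], *config.get("args", []), url]
    return argv, config.get("maxConcurrent", 1)  # assumed default: one download at a time


platforms = {
    "mega.nz": {"tool": "gallery-dl", "args": ["--no-mtime"], "maxConcurrent": 2},
    "youtube.com": {"tool": "yt-dlp", "args": ["-f", "best"]},
}
print(build_download_command(platforms, "https://www.youtube.com/watch?v=abc"))
# (['yt-dlp', '-f', 'best', 'https://www.youtube.com/watch?v=abc'], 1)
```

Returning an argv list rather than a shell string lets it be passed to `subprocess.run` without a shell, which avoids quoting problems with URLs containing `&` or `?`.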

5. resources/ Directory (V2)

Organized resource storage:

resources/
├── downloads/          # Downloaded files (by date)
├── links/
│   ├── mega.json       # Mega links index
│   ├── baidu.json      # Baidu pan links
│   └── gdrive.json     # Google Drive links
├── codes/
│   └── passwords.json  # Extract codes
└── index.json          # Unified resource index

🚀 Usage

Basic Usage

Use edge-knowledge to mine cost-effective Instagram growth services

Real-World Examples

These prompts demonstrate the skill's edge knowledge tracking capabilities:

Example 1: Black Hat SEO Techniques

Use edge-knowledge to find the latest black hat SEO techniques for 2026

What you'll get:

- Latest PBN (Private Blog Network) strategies

- Parasite SEO tactics that still work

- Cloaking techniques to bypass Google detection

- Link farming methods and automation tools

Example 2: Affiliate Marketing Arbitrage

Use edge-knowledge to discover profitable affiliate traffic sources

What you'll get:

- Underground traffic sources with high ROI

- CPA networks that accept gray hat methods

- Media buying strategies from top affiliates

- Real case studies with actual numbers

Example 3: Social Media Growth Hacks

Use edge-knowledge to find Instagram automation tools that bypass detection

What you'll get:

- Automation bots that work in 2026

- SMM panels with real engagement

- Growth hacking scripts and techniques

- Risk assessment and detection avoidance

Example 4: Tool Discovery

Use edge-knowledge to find cracked SEO tools and automation software

What you'll get:

- Working cracks for premium SEO tools

- Automation scripts for scraping and posting

- Nulled WordPress plugins and themes

- Community reviews and safety ratings

Workflow

- Stage 1: Forum Recommendation - System recommends relevant forums based on your needs

- Stage 2: Smart Crawling - Deep crawls forum content using browser-use

- Stage 3: Content Analysis - Identifies edge knowledge, power users, resources

- Stage 4: Report Generation - Outputs structured Markdown report

🛠️ Bundled Tool Modules

The following modules are included for edge-case automation needs:

| Module | Purpose |
|---|---|
| rules/access-control.md | Access control & authorization boundary definition |
| mcp_config.json | Central MCP server & tool routing configuration |
| cdp-scripts/ | CDP-based forum crawlers (see CDP Scripts section below) |

All tools are called automatically by the routing engine when triggered.

CDP Scripts

Browser automation crawlers using Chrome DevTools Protocol for targeted forum mining:

| Script | Target | What it does |
|---|---|---|
| bhw-crawler.mjs | BlackHatWorld | Thread listing crawler |
| bhw-crawler-v2.mjs | BlackHatWorld | V2 with value signal detection |
| bhw-detail-crawler.mjs | BlackHatWorld | Deep thread content extraction |
| bhw-backlink-scrape.mjs | BlackHatWorld | Backlink opportunity scraper |
| bhw-vendor-verify.mjs | BlackHatWorld | Vendor/service verification |
| cdp-batch-bbhf.mjs | BestBlackHatForum | Batch forum crawler |
| cdp-bbhf-threads.mjs | BestBlackHatForum | Thread detail extractor |
| cdp-buildersociety.mjs | BuilderSociety | Forum crawler |
| cdp-buildersociety-v2.mjs | BuilderSociety | V2 enhanced extraction |
| scrape-onehack.mjs | OneHack | Content scraper |

📚 Supported Forums (Partial List)

| Rank | Forum | Rating | Cost | Target Audience |
|---|---|---|---|---|
| 1 | GreyHatMafia | 9.5/10 | Free | Everyone |
| 4 | SEO Isn't Dead | 9/10 | Free | SEO Practitioners |
| 6 | BlackHatWorld | 8.5/10 | Free | General Marketing |
| 7 | BestBlackHatForum | 9.5/10 | Free | Recommended: slenderman's posts |
| 8 | | 10/10 | Free | Value in comment sections |

📋 Report Example

Each generated report contains three layers of analysis:

1. Edge Knowledge Identification

### Edge Knowledge #1: [Knowledge Title]

**Compressed Expression**: [One-sentence summary]

**Easy Explanation**: [Detailed explanation]

**Viewpoints**: @username: "viewpoint content"

**Risk**: [Potential risks]

**Cost**: [Time/money/learning cost]

**Source Link**: [Original link]

2. Power User Identification

### Power User #1: @username

**Username**: username (forum name)

**High-Energy Viewpoints**: "viewpoint1", "viewpoint2"

**Link**: [User profile link]

3. Resource Extraction

### Resource #1: [Tool/Service Name]

**Name**: Tool name

**Link**: [Tool link]

**Description**: [Feature description]

**Price**: [Price information]

**Review**: [User review summary]
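A minimal sketch of rendering the Edge Knowledge layer in the template above. The dataclass, its field names, and the YYYY-MM-DD-topic.md filename pattern are hypothetical; the documentation only says reports are named by date and topic.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field
from datetime import date


@dataclass
class EdgeKnowledge:
    """One item of the Edge Knowledge layer (field names are hypothetical)."""
    title: str
    compressed: str
    explanation: str
    risk: str
    cost: str
    source: str
    viewpoints: list[tuple[str, str]] = field(default_factory=list)  # (username, quote)


def render_edge_knowledge(items: list[EdgeKnowledge]) -> str:
    """Render items as numbered Markdown sections following the report template."""
    sections = []
    for n, item in enumerate(items, start=1):
        views = "; ".join(f'@{user}: "{quote}"' for user, quote in item.viewpoints)
        sections.append("\n\n".join([
            f"### Edge Knowledge #{n}: {item.title}",
            f"**Compressed Expression**: {item.compressed}",
            f"**Easy Explanation**: {item.explanation}",
            f"**Viewpoints**: {views or 'None recorded'}",
            f"**Risk**: {item.risk}",
            f"**Cost**: {item.cost}",
            f"**Source Link**: {item.source}",
        ]))
    return "\n\n".join(sections)


def report_filename(topic: str, day: date) -> str:
    """Build a date + topic filename such as 2026-01-15-instagram-growth.md."""
    slug = re.sub(r"[^a-z0-9]+", "-", topic.lower()).strip("-")
    return f"{day.isoformat()}-{slug}.md"


item = EdgeKnowledge(
    title="Example tactic",
    compressed="One-sentence summary",
    explanation="Detailed explanation",
    risk="Potential risks",
    cost="Time/money/learning cost",
    source="https://example.com/threads/1",
    viewpoints=[("username", "viewpoint content")],
)
print(render_edge_knowledge([item]))
print(report_filename("Instagram Growth", date(2026, 1, 15)))  # 2026-01-15-instagram-growth.md
```

The Power User and Resource layers can follow the same pattern with their own dataclasses and field labels.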

🔧 Technical Architecture

Dependencies

- Claude Code CLI

- browser-use skill / agent-browser skill

- Python 3.8+

Cross-Platform Installer

install.sh auto-detects macOS, Ubuntu/Debian, CentOS/RHEL, and Arch Linux, installs system packages, configures Playwright, and sets up MCP servers.

V2 Additional Dependencies

pip install gallery-dl yt-dlp crawl4ai

Data Flow

User Need → Read memory.json → Recommend Forums → User Confirms →
Check Credentials → Apply Anti-Detection Config → browser-use Crawl →
Claude Analysis → Generate Report → Update memory.json

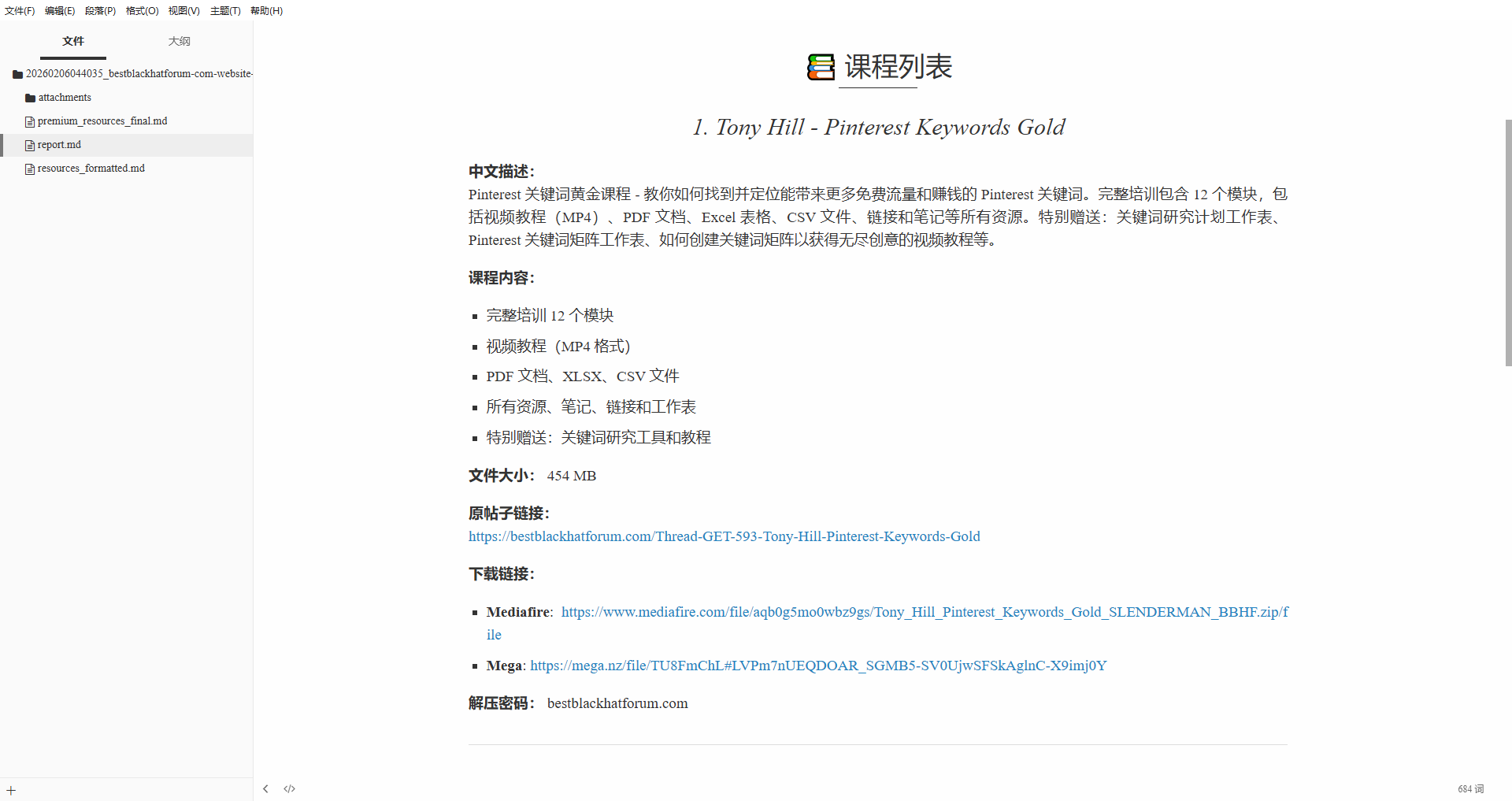

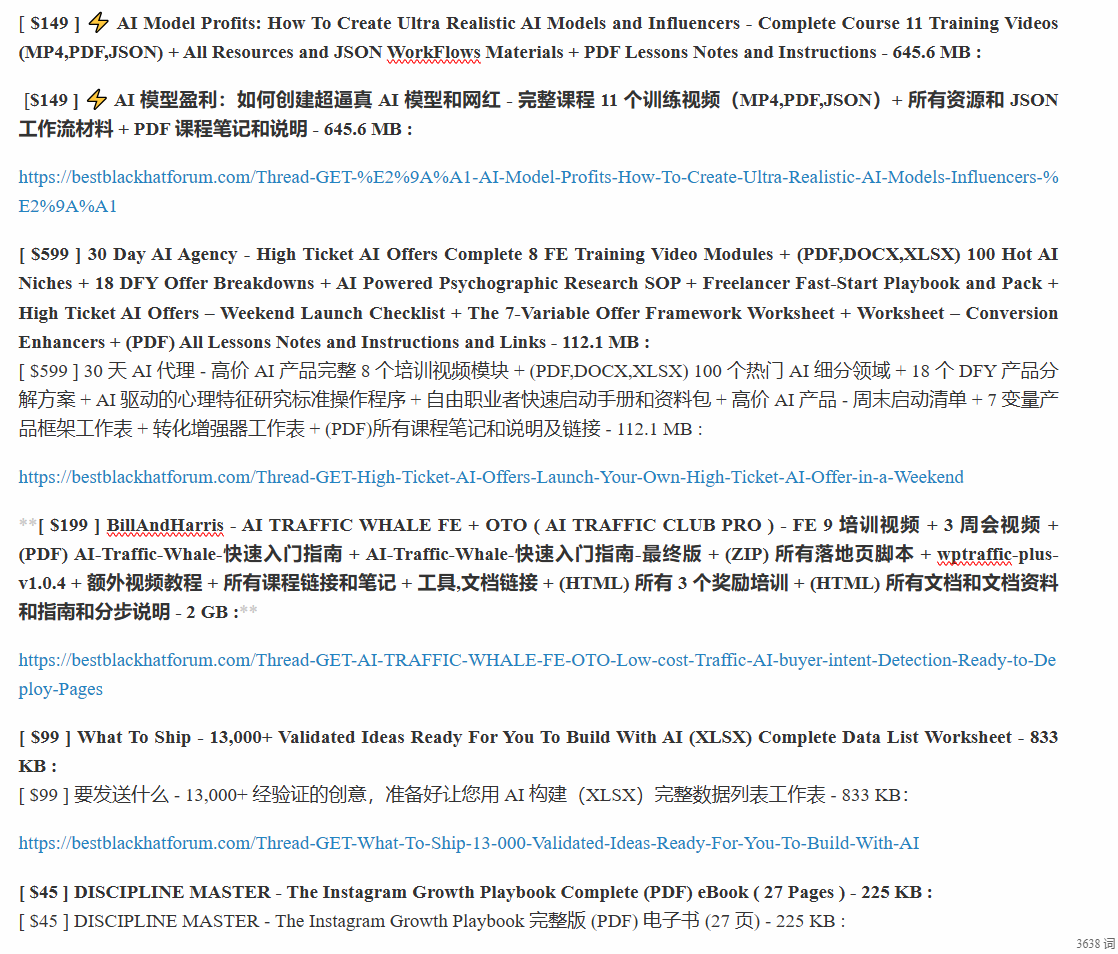

Reference Screenshots

⚠️ Important Notes

- Legal Use - For educational and research purposes only, comply with forum rules and local laws

- Account Security - Use dedicated accounts, avoid using your main accounts

- Anti-Detection - System automatically applies anti-detection strategies, but use cautiously

- Content Risk - Edge knowledge may contain risky operations, use your own judgment

📄 License

MIT License

👤 Author

黑咖啡和冰月亮 / Black Coffee & Ice Moon (@weihackings)

🤝 Contributing

Issues and Pull Requests are welcome!


❓ FAQ

Q1: Is this tool legal to use?

A: This tool is for educational and research purposes only. Always comply with forum terms of service and local laws. Use at your own risk.

Q2: Will my account get banned?

A: The tool includes anti-detection features (random delays, session reuse, login limits), but there's always a risk. We recommend:

- Use dedicated accounts, not your main accounts

- Respect the maxLoginPerDay limit (default: 3)

- Don't crawl too aggressively

Q3: Do I need to provide forum credentials?

A: Only for forums that require login (like BestBlackHatForum). For public forums (like Reddit), no credentials needed. Your credentials are stored locally in memory.json and never committed to Git.

Q4: How do I add my own forums?

A: Edit forum_database.json and add your forum to the appropriate category:

{
  "name": "YourForum",
  "url": "https://yourforum.com",
  "rating": 9.0,
  "cost": "Free",
  "target_audience": "Your Target Audience",
  "tags": ["tag1", "tag2"]
}
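Before editing forum_database.json by hand, a small check can catch malformed entries. A minimal sketch, assuming the six fields above are all required, that ratings use the 0-10 scale from the Supported Forums table, and that forum names must be unique across categories. `validate_forum` and `add_forum` are hypothetical helpers, not part of the skill.

```python
from __future__ import annotations

from typing import Any
from urllib.parse import urlsplit

REQUIRED_FIELDS = ("name", "url", "rating", "cost", "target_audience", "tags")


def validate_forum(entry: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the entry looks valid."""
    problems = [f"missing field: {key}" for key in REQUIRED_FIELDS if key not in entry]
    if problems:
        return problems
    if urlsplit(entry["url"]).scheme not in ("http", "https"):
        problems.append("url must start with http:// or https://")
    rating = entry["rating"]
    # bool is a subclass of int, so reject it explicitly
    if isinstance(rating, bool) or not isinstance(rating, (int, float)) or not 0 <= rating <= 10:
        problems.append("rating must be a number from 0 to 10")
    tags = entry["tags"]
    if not isinstance(tags, list) or not all(isinstance(t, str) for t in tags):
        problems.append("tags must be a list of strings")
    return problems


def add_forum(db: dict[str, Any], category: str, entry: dict[str, Any]) -> None:
    """Validate the entry, reject duplicate names, then append it to the category."""
    problems = validate_forum(entry)
    existing = {
        forum.get("name")
        for cat in db.get("categories", {}).values()
        for forum in cat.get("forums", [])
    }
    name = entry.get("name")
    if name is not None and name in existing:
        problems.append(f"duplicate forum name: {name}")
    if problems:
        raise ValueError("; ".join(problems))
    cat = db.setdefault("categories", {}).setdefault(category, {"description": "", "forums": []})
    cat.setdefault("forums", []).append(entry)


db = {"categories": {"Deep_Dive_Forums": {"description": "Deep content", "forums": []}}}
entry = {
    "name": "YourForum",
    "url": "https://yourforum.com",
    "rating": 9.0,
    "cost": "Free",
    "target_audience": "Your Target Audience",
    "tags": ["tag1", "tag2"],
}
add_forum(db, "Deep_Dive_Forums", entry)
print(validate_forum({"name": "X", "url": "https://x.example"}))
# ['missing field: rating', 'missing field: cost', 'missing field: target_audience', 'missing field: tags']
```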

Q5: Can I create domain-specific branches?

A: Yes! Fork the repo and create a branch like seo-expert or affiliate. Customize forum_database.json and skill.md for your domain. See our contribution guidelines.

Q6: How do I integrate with Notion/Feishu?

A: This is a community contribution opportunity! You can:

- Fork the repo

- Add integration code to push reports to your collaboration tool

- Submit a PR with your integration

Q7: What's the difference between edge knowledge and common knowledge?

A:

- Common Knowledge: Information easily found via Google/ChatGPT (e.g., "write good content")

- Edge Knowledge: Scarce, risky, or controversial tactics from underground communities (e.g., "PBN networks that bypass Google penalties in 2026")

Q8: How often is the forum database updated?

A: Community-driven! Submit PRs to add new forums or update ratings. We review and merge quality contributions regularly.

Q9: Can I use this for white hat SEO?

A: While the tool focuses on "edge" knowledge, you can customize forum_database.json to include white hat forums and adjust search strategies accordingly.

Q10: How do I report bugs or request features?

A: Open an issue on the repository's GitHub Issues page with:

- Clear description of the bug/feature

- Steps to reproduce (for bugs)

- Expected vs actual behavior

- Your environment (OS, Claude Code version)

OpenCLI Integration

This local skill now ships with a Windows PowerShell OpenCLI layer:

- scripts/setup-opencli.ps1

- scripts/test-opencli.ps1

- scripts/invoke-opencli.ps1

Recommended order:

- Install and enable the Chrome extension named Browser Bridge

- Run scripts/setup-opencli.ps1

- Use scripts/invoke-opencli.ps1 for browser-backed OpenCLI commands

- Fall back to the existing browser-use, MCP, or custom forum flows when OpenCLI does not cover the target workflow